Quix Delivers Low-Code/No-Code Platform for Building Data Pipelines

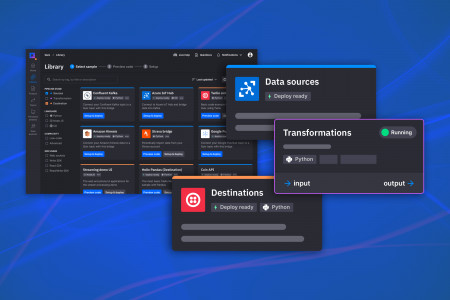

LONDON, February 16, 2022 (Newswire.com) - Data stream processing platform Quix today launched the first low-code/no-code (LCNC) interface for streaming data pipelines. Until now, working with streaming data has been the sole domain of big tech companies with vast teams of engineers. Now anyone can manage data sources, transformations and destinations with a few clicks, using Quix's out-of-the-box data connectors and open source library of code samples.

"This is a game-changer for companies that rely on redundant batch and ETL (extract, transform and load) systems," said Michael Rosam, CEO and co-founder of Quix. "We delivered a platform that simplifies stream processing so that more companies can benefit from modern data ops."

Other big data experts agree. Ali Ghodsi, CEO of Databricks, explained, "All the ETL that people are doing today, and all the data processing that they're doing today, could be simplified if you actually turn it into a streaming case, because the streaming engines take care of the operationalization for you."

The latest product release from Quix makes it easier and more efficient to build pipelines and workflows for ever-evolving streaming data sets, such as those from IoT and other digital devices.

As part of this release, Quix launched an open source library of code samples, including connectors for Confluent Kafka, Twilio and AWS Kinesis, so that the community can benefit from a network of contributors. Underpinned by an open source SDK, these code samples help Quix users achieve their primary goal: to get a solution up and running quickly.

"Our experience building data applications with customers in industries such as mobility, finance and manufacturing revealed a massive unmet need," said Tomas Neubauer, CTO and co-founder of Quix. "Many companies are rapidly adopting Kafka for data streaming, but few people can use this complex infrastructure. We've solved this by creating a platform that simplifies working with data in Kafka."

Quix customer Control, which delivers race-winning telemetry solutions, used Quix to improve data speed and resiliency in its mobile IoT application in just two weeks. By ensuring that its modems connect automatically to the best available network, Control avoids a performance degradation of up to 23%. Quix also removed a massive amount of manual configuration that used to fall on Control's engineers.

"The lightbulb moment happened when we realized how resilient Quix is," said Nathan Sanders, technical director and founder of Control. "Reliability is a major consideration when building mission-critical products. We've automated a new product feature, and Quix's resilient architecture gives us confidence that it won't fail."

Learn more about Quix's new workflow, open source code library and open source SDK in this in-depth technical blog post.

About Quix

Quix gives everyone the power to build efficient streaming data pipelines in a few clicks. Organizations use Quix to bring data-driven products to market faster, integrate ML and AI, and avoid the massive cost of data infrastructure.

Quix was founded in the UK by Formula 1 engineers with deep data stream processing experience. Its platform is trusted by developers at McLaren, Deloitte and the National Health Service (UK), and across industries including mobility and logistics, financial services, gaming, and automotive. For a free trial and to learn more, visit https://quix.ai.

Media contacts

Press contact: Heidi Joy Tretheway, VP Marketing, Quix

Source: Quix